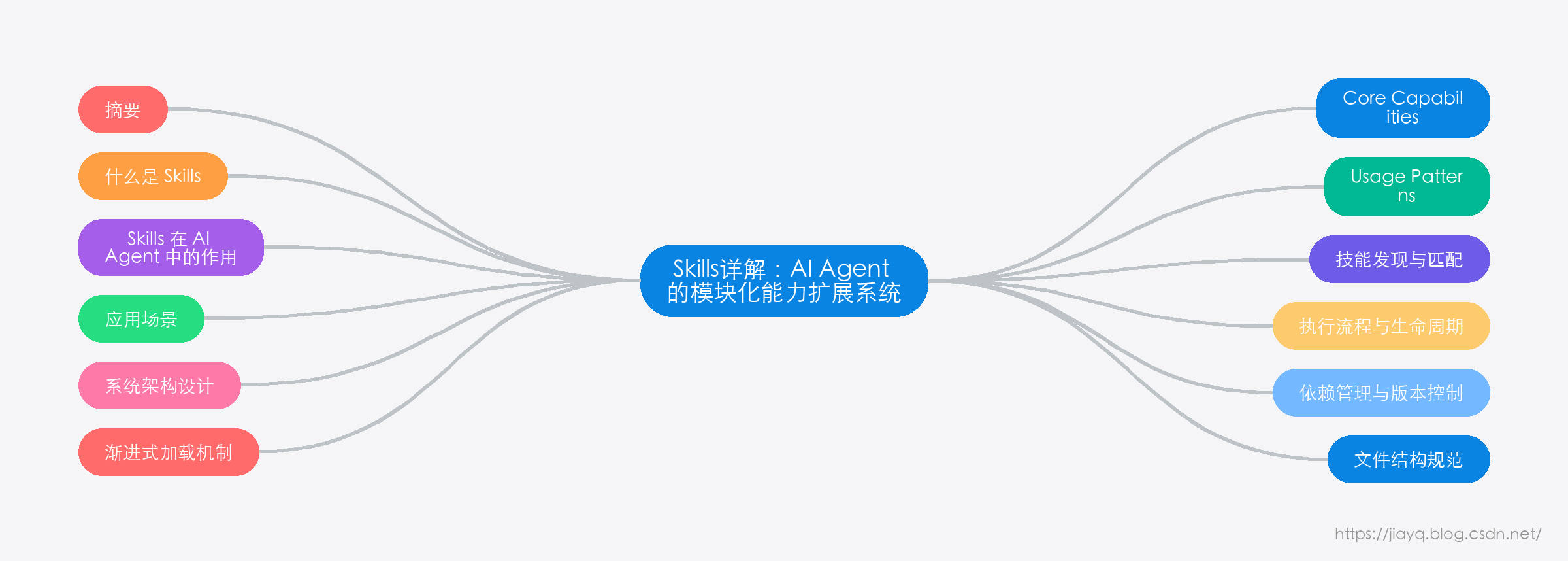

Skills Explained: A Modular Capability Extension System for AI Agents

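Introduction

AI Agents are being deployed in an ever wider range of scenarios, yet general-purpose AI models often lack the domain knowledge and operating procedures that specialized work demands. Giving an AI Agent such specialized capabilities has become an important technical challenge.

The Skills system is designed to address this problem. It offers a standardized way for developers to package domain knowledge, tool integrations, and operating procedures into reusable modules, so that AI Agents with specialized capabilities can be built quickly.

What Are Skills

Skills are modular, self-contained capability extension packages that give an AI Agent domain-specific knowledge, workflows, and tool integrations. A Skill can be thought of as a "professional training manual" for the AI Agent: it turns a general-purpose AI model into an expert system for a specific domain.

Core Value of Skills

- Specialized capability: standardizes complex domain knowledge and operating procedures so the AI Agent can execute them at an expert level
- Modular design: each Skill is an independent module that can be developed, tested, and deployed on its own
- Reusability: a Skill is developed once and reused many times, which cuts repeated development work
- Standardized interface: a uniform configuration format and invocation style lowers learning and maintenance costs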

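Skills vs. Traditional Plugin Systems

| Aspect | Traditional plugin system | Skills system |
|---|---|---|
| Primary purpose | Feature extension | Transfer of knowledge and procedures |
| Content form | Executable code | Configuration file + resource bundle |
| Loading method | Dynamic loading at runtime | On-demand loading into context |
| Typical use | Feature enhancement | Domain-specific guidance |
| Maintenance cost | Requires code maintenance | Mostly documentation maintenance |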

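The Role of Skills in an AI Agent

In a modern AI Agent architecture, Skills serve as both a "knowledge base" and an "operations manual":

1. Knowledge Carrier

Skills organize domain knowledge in a structured form, including:

- Concept definitions: precise definitions of domain terms and concepts
- Operating procedures: standardized workflows and best practices
- Configuration parameters: how to configure tools and systems
- Troubleshooting: solutions to common problems

2. Tool Integration Guide

Skills provide standardized methods for integrating with external tools and systems:

- API calls: how to call third-party service interfaces
- Configuration management: standardized templates for system configuration
- Data processing: standard procedures for transforming and processing data
- Error handling: mechanisms for handling exceptional conditions

3. Context Management

Skills use a progressive loading mechanism to manage the AI Agent's context efficiently:

- Metadata first: concise descriptions allow fast matching against the request
- On-demand loading: detailed content is loaded only when it is needed
- Resource separation: different kinds of resources are managed separately

Application Scenarios

The Skills system is well suited to the following scenarios:

1. Enterprise Automation

- DevOps workflows: standardized procedures for deployment, monitoring, and incident handling
- Data processing: ETL pipelines, report generation, data analysis
- System integration: data synchronization and interface calls across multiple systems

2. Professional Domain Services

- Medical diagnosis: symptom analysis, diagnostic suggestions, treatment plans
- Legal consulting: statute lookup, case analysis, document drafting
- Financial analysis: financial statement analysis, risk assessment, investment advice

3. Development Toolchain

- Code generation: template-based automatic code generation
- Test automation: test case generation, test execution, result analysis
- Documentation generation: API documentation, user manuals, technical specifications

With the Skills system, developers can quickly build specialized AI Agents that are more effective in their target domains.

The following chapters examine how Skills work and how they are configured, and walk through concrete examples of developing and using them.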

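Principles

Understanding how the Skills system works is the basis for developing and using Skills effectively. This chapter analyzes its technical implementation from several angles, including architecture, loading mechanism, and execution flow.

System Architecture

The Skills system uses a layered architecture, which keeps it extensible and maintainable: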

[Figure: layered architecture. AI Agent Layer: AI Agent Core. Skills Management Layer: Skills Manager, Skill Loader, Skill Registry, Context Manager. Skills Storage Layer: Skill Metadata, Skill Instructions, Bundled Resources. Resource Types: Scripts, References, Assets.]

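Core Components

1. Skills Manager

- Responsibility: coordinates the lifecycle of all Skills
- Functions: skill discovery, load control, execution coordination
- Characteristics: acts as the main interface between the AI Agent and the Skills system

2. Skill Loader

- Responsibility: parses and loads Skills
- Functions: YAML metadata parsing, Markdown content processing, resource file management
- Characteristics: supports progressive loading to reduce context usage

3. Skill Registry

- Responsibility: maintains an index of all registered Skills
- Functions: skill lookup, matching, version management
- Characteristics: provides fast skill retrieval and filtering

4. Context Manager

- Responsibility: manages loading and unloading of Skills in the AI Agent's context
- Functions: context optimization, memory management, resource cleanup
- Characteristics: keeps context usage efficient

Progressive Loading Mechanism

The core innovation of the Skills system is its progressive loading mechanism, which works around the limits of the context window:

Three-Level Loading Architecture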

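[Flowchart: user request → skill matching → Level 1: metadata loading → match? If no, try the next skill; if yes, Level 2: instruction loading → more resources needed? If yes, Level 3: on-demand resource loading; if no, execute the task.]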

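Level 1: Metadata Loading (~100 words)

name: "image-processor"
description: "Process and manipulate images including rotation, resizing, format conversion, and quality optimization"

Characteristics:

- Always kept in context
- Used for fast skill matching
- Contains the skill's core identifying information

Level 2: Instruction Loading (<5K words)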

# Image Processing Skill

## Core Capabilities

- Image rotation and flipping

- Size adjustment and cropping

- Format conversion (JPEG, PNG, WebP)

- Quality optimization

## Usage Patterns

When users request image manipulation tasks, follow these steps:

1. Analyze the input image format and properties

2. Determine the required operations

3. Execute operations in optimal sequence

4. Validate output quality

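Characteristics:

- Loaded when the skill is triggered
- Contains the core operating guide
- Provides a standardized workflow

Level 3: Resource Loading (unlimited)

- Scripts: executable scripts that can be run directly without being loaded into context
- References: detailed documents, loaded into context on demand
- Assets: template files used to generate output

Loading Strategy Optimization

- Smart preloading: preload related skills based on usage frequency and dependencies
- Caching: cache the content of frequently used skills
- Dynamic unloading: automatically unload skill content that has not been used for a long time
- Compressed storage: store large resource files in compressed form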
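The three levels and the caching strategy can be sketched roughly as follows. This is a minimal illustration, not the system's actual loader: `ProgressiveSkillLoader`, `SkillMetadata`, and `parse_flat_yaml` are hypothetical names, the front matter parser handles only flat `key: value` pairs without trailing comments, and the small LRU cache merely stands in for the caching and dynamic unloading strategies.

```python
from collections import OrderedDict
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class SkillMetadata:
    name: str
    description: str


def split_front_matter(text: str) -> tuple[str, str]:
    """Split a SKILL.md document into (front matter, Markdown body)."""
    parts = text.split("---", 2)
    if not text.startswith("---") or len(parts) < 3:
        return "", text
    return parts[1].strip(), parts[2].strip()


def parse_flat_yaml(front: str) -> dict[str, str]:
    """Parse top-level `key: value` pairs only (not a full YAML parser)."""
    fields = {}
    for line in front.splitlines():
        # Skip blank lines, nested lines, comments, and list items.
        if line[:1] in ("", " ", "\t", "#", "-") or ":" not in line:
            continue
        key, value = line.split(":", 1)
        fields[key.strip()] = value.strip().strip('"')
    return fields


class ProgressiveSkillLoader:
    def __init__(self, root: Path, max_cached: int = 8):
        self.root = Path(root)
        self.max_cached = max_cached
        self._cache: OrderedDict[str, str] = OrderedDict()

    def load_metadata(self) -> list[SkillMetadata]:
        """Level 1: read only name and description for every skill."""
        skills = []
        for skill_file in sorted(self.root.glob("*/SKILL.md")):
            front, _ = split_front_matter(skill_file.read_text(encoding="utf-8"))
            fields = parse_flat_yaml(front)
            skills.append(SkillMetadata(fields["name"], fields["description"]))
        return skills

    def load_instructions(self, name: str) -> str:
        """Level 2: load the Markdown body once the skill is triggered."""
        if name in self._cache:
            self._cache.move_to_end(name)  # mark as most recently used
            return self._cache[name]
        text = (self.root / name / "SKILL.md").read_text(encoding="utf-8")
        _, body = split_front_matter(text)
        self._cache[name] = body
        if len(self._cache) > self.max_cached:
            self._cache.popitem(last=False)  # unload the least recently used skill
        return body

    def resource_path(self, name: str, relative: str) -> Path:
        """Level 3: resolve a bundled resource; the caller reads or executes it."""
        return self.root / name / relative
```

Only `load_metadata` touches every skill; the body of a skill is read the first time it is triggered, and resources are never read by the loader itself.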

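Skill Discovery and Matching

The Skills system uses a multi-dimensional matching algorithm to find the most suitable skill:

Matching Dimensions

1. Semantic Matching

- Keyword matching: based on keywords in the skill description
- Synonym expansion: matches synonyms and related terms
- Context understanding: uses the conversation context for semantic interpretation

2. Capability Matching

- Capability tags: based on the capability tags the skill declares
- Input/output types: matches the expected input and output formats
- Complexity assessment: selects a skill appropriate to the task's complexity

3. Priority Matching

- Usage frequency: prefers frequently used skills
- Success rate: ranks by historical execution success rate
- User preference: learns the user's usage preferences

Matching Algorithm Flow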

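def match_skills(user_request, available_skills):
    """
    Example skill matching algorithm.

    The scoring helpers (calculate_semantic_similarity, match_capabilities,
    extract_requirements, get_historical_performance) are assumed to be
    defined elsewhere and to return values in [0, 1].
    """
    candidates = []
    for skill in available_skills:
        score = 0

        # Semantic matching score
        semantic_score = calculate_semantic_similarity(
            user_request, skill.description
        )
        score += semantic_score * 0.4

        # Capability matching score
        capability_score = match_capabilities(
            extract_requirements(user_request),
            skill.capabilities
        )
        score += capability_score * 0.3

        # Historical performance score
        performance_score = get_historical_performance(skill.id)
        score += performance_score * 0.3

        candidates.append((skill, score))

    # Sort by score and return the best matches
    candidates.sort(key=lambda x: x[1], reverse=True)
    return candidates[:3]  # Return the top 3 candidate skills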
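The listing above leaves its scoring helpers undefined. As a rough, runnable illustration of the same weighted scheme (0.4 / 0.3 / 0.3), the sketch below substitutes simple stand-ins that are assumptions, not the system's real scorers: Jaccard token overlap for semantic similarity, the fraction of required capabilities a skill covers for capability matching, and a stored `success_rate` field for historical performance. The `Skill` dataclass is likewise hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str
    capabilities: set[str] = field(default_factory=set)
    success_rate: float = 0.5  # hypothetical historical score in [0, 1]


def tokenize(text: str) -> set[str]:
    """Lowercase whitespace tokens with surrounding punctuation removed."""
    return {word.strip(".,:;!?").lower() for word in text.split()} - {""}


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    return len(a & b) / len(a | b) if a | b else 0.0


def match_skills(request: str, required: set[str], skills: list[Skill], top_k: int = 3):
    """Score every skill and return the top_k (skill, score) pairs."""
    request_tokens = tokenize(request)
    scored = []
    for skill in skills:
        semantic = jaccard(request_tokens, tokenize(skill.description))
        capability = len(required & skill.capabilities) / len(required) if required else 0.0
        score = 0.4 * semantic + 0.3 * capability + 0.3 * skill.success_rate
        scored.append((skill, score))
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]
```

A real system would likely replace the token overlap with embedding similarity; the point here is only how the three dimensions combine into one ranking.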

Execution Flow and Lifecycle

Complete Execution Flow

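[Sequence diagram. Participants: User, AI Agent, Skills Manager, Skill Loader, Skill Registry. Messages in order: send request; analyze skill requirements; query matching skills; return candidate skill list; load best-matching skill; return skill content; provide skill guidance; execute task; return result; report execution status; update skill statistics.]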

Skill Lifecycle Management

1. Registration

def register_skill(skill_path):
    """Register a new skill with the system."""
    # Validate the skill format
    validate_skill_format(skill_path)

    # Parse the metadata
    metadata = parse_skill_metadata(skill_path)

    # Check dependencies
    check_dependencies(metadata.dependencies)

    # Add the skill to the registry
    skill_registry.register(metadata)

    # Build the search index
    build_search_index(metadata)

2. Activation

def activate_skill(skill_id, context):
    """Activate a skill and load it into the context."""
    # Load the skill instructions
    instructions = skill_loader.load_instructions(skill_id)

    # Inject them into the context
    context.inject_skill_knowledge(instructions)

    # Prepare the resource access interface
    setup_resource_access(skill_id, context)

    return context

3. Execution

def execute_with_skill(skill_id, task, context):
    """Execute a task under the guidance of a skill."""
    # Get the skill guidance
    guidance = get_skill_guidance(skill_id, task)

    # Execute the task
    result = execute_task(task, guidance, context)

    # Record the execution result
    log_execution_result(skill_id, task, result)

    return result

4. Cleanup

def cleanup_skill(skill_id, context):
    """Clean up resources associated with a skill."""
    # Remove the skill content from the context
    context.remove_skill_knowledge(skill_id)

    # Clean up temporary resources
    cleanup_temporary_resources(skill_id)

    # Update usage statistics
    update_usage_statistics(skill_id)
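One way to make sure the cleanup stage always runs after activation, even when the task fails, is to wrap the two in a context manager. The sketch below is an illustration under assumptions: `Context` is a hypothetical stand-in for the agent's context object, and `skill_session` is not a name from the Skills system.

```python
from contextlib import contextmanager


class Context:
    """Hypothetical agent context: maps a skill id to its loaded instructions."""

    def __init__(self):
        self.loaded: dict[str, str] = {}


@contextmanager
def skill_session(skill_id: str, instructions: str, context: Context):
    """Activate a skill for the duration of a with-block, then clean it up."""
    context.loaded[skill_id] = instructions  # activation
    try:
        yield context  # execution happens inside the with-block
    finally:
        context.loaded.pop(skill_id, None)  # cleanup runs even if the task raises
```

Used as `with skill_session(skill_id, instructions, ctx): ...`, the skill content is removed from the context as soon as the block exits.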

Dependency Management and Version Control

Dependency Handling

The Skills system supports complex dependency management:

1. Dependencies Between Skills

# SKILL.md front matter
dependencies:
  required:
    - "file-processor:^1.0.0"
    - "image-optimizer:>=2.1.0"
  optional:
    - "cloud-storage:*"

2. System Dependencies

system_requirements:
  python: ">=3.8"
  node: ">=14.0"
  tools:
    - "imagemagick"
    - "ffmpeg"

3. Dependency Resolution Algorithm

def resolve_dependencies(skill_metadata):
    """Resolve a skill's dependencies into a load order."""
    dependency_graph = build_dependency_graph(skill_metadata)

    # Check for circular dependencies
    if has_circular_dependency(dependency_graph):
        raise CircularDependencyError()

    # Topological sort determines the load order
    load_order = topological_sort(dependency_graph)

    # Check version compatibility
    validate_version_compatibility(load_order)

    return load_order
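The cycle check and topological sort can be illustrated with Python's standard-library `graphlib` (Python 3.9+). This is a sketch under assumptions: the dependency graph is given as a plain dictionary mapping each skill name to the names it depends on, `resolve_load_order` is a hypothetical helper, and version checks are left out.

```python
from graphlib import CycleError, TopologicalSorter


class CircularDependencyError(Exception):
    """Raised when skills depend on each other in a cycle."""


def resolve_load_order(dependencies: dict[str, list[str]]) -> list[str]:
    """Return skill names ordered so each dependency precedes its dependents."""
    try:
        # TopologicalSorter expects a mapping of node -> predecessors.
        return list(TopologicalSorter(dependencies).static_order())
    except CycleError as exc:
        # exc.args[1] holds the list of nodes that form the cycle.
        raise CircularDependencyError(f"cycle detected: {exc.args[1]}") from exc
```

Because `static_order()` raises `CycleError` when a cycle exists, detection and ordering happen in a single pass instead of two separate steps.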

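Version Management Strategy

1. Semantic Versioning

- Major version: incompatible API changes
- Minor version: backward-compatible new functionality
- Patch version: backward-compatible bug fixes

2. Version Compatibility Matrix

compatibility_matrix:
  "1.0.x": ["1.0.0", "1.0.1", "1.0.2"]
  "1.1.x": ["1.1.0", "1.1.1"]
  "2.0.x": ["2.0.0"]

3. Automatic Update Mechanism

def check_skill_updates():
    """Check registered skills for updates."""
    for skill in registered_skills:
        latest_version = get_latest_version(skill.id)

        # Schedule an update only if a newer, compatible version exists
        if version_compare(latest_version, skill.version) > 0:
            if is_compatible_update(skill.version, latest_version):
                schedule_update(skill.id, latest_version)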

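With this architecture and these mechanisms, the Skills system can extend an AI Agent with specialized capabilities efficiently and reliably while remaining maintainable and extensible.

Format

A standardized format is what lets the Skills system run efficiently. This chapter describes the file structure, configuration syntax, and content organization of Skills, to help developers create Skills that conform to the standard.

File Structure Specification

Standard Directory Structure

Every Skill should follow this standard directory structure: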

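skill-name/
├── SKILL.md                 # Core skill file (required)
├── scripts/                 # Executable scripts (optional)
│   ├── process_data.py      # Example Python script
│   ├── deploy.sh            # Example shell script
│   └── utils.js             # JavaScript utility script
├── references/              # Reference documents (optional)
│   ├── api_docs.md          # API documentation
│   ├── schemas.json         # Data schema definitions
│   └── troubleshooting.md   # Troubleshooting guide
└── assets/                  # Resource files (optional)
    ├── templates/           # Template files
    ├── icons/               # Icon resources
    └── configs/             # Configuration file templates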

File Naming Conventions

| File type | Convention | Examples |
|---|---|---|
| Skill directory | kebab-case | image-processor, data-analyzer |
| Core file | Fixed name | SKILL.md |
| Script files | snake_case | process_image.py, backup_data.sh |
| Document files | kebab-case | api-reference.md, user-guide.md |
| Configuration files | kebab-case | database-config.json, server-settings.yaml |
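These conventions are easy to check mechanically. The following sketch is an illustration only: the `follows_convention` helper and its `kind` labels are hypothetical and not part of the Skills system.

```python
import re

KEBAB_CASE = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")
SNAKE_CASE = re.compile(r"[a-z0-9]+(?:_[a-z0-9]+)*")

# Hypothetical labels for the rows of the naming table.
PATTERNS = {
    "skill_dir": KEBAB_CASE,
    "script": SNAKE_CASE,
    "document": KEBAB_CASE,
    "config": KEBAB_CASE,
}


def follows_convention(filename: str, kind: str) -> bool:
    """Check a file or directory name against the convention for its kind."""
    if kind == "core":
        return filename == "SKILL.md"
    stem = filename.rsplit(".", 1)[0]  # drop the extension, if any
    return PATTERNS[kind].fullmatch(stem) is not None
```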

SKILL.md File Format

Basic Structure

A SKILL.md file consists of YAML front matter followed by Markdown content:

---

name: "skill-name"

description: "Skill description for matching and discovery"

version: "1.0.0"

author: "Developer Name"

tags: ["category1", "category2"]

dependencies:
  required: []
  optional: []

alwaysApply: false

enabled: true

---

# Skill Title

## Overview

Brief description of what this skill does...

## Usage

Instructions on how to use this skill...

## Examples

Concrete examples of skill usage...

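YAML Front Matter Specification

Required Fields

---
name: "data-processor"  # Unique skill identifier
description: "Process and transform data using various algorithms and formats"  # Skill description
---

Field reference:

| Field | Type | Description | Example |
|---|---|---|---|
| `name` | string | Unique identifier of the skill, used for internal references | "image-processor" |
| `description` | string | Functional description of the skill, used for matching and discovery | "Process images including resize, crop, and format conversion" |

Important notes:

- `name` must be unique within the system; kebab-case is recommended
- `description` should accurately describe the skill's core function and avoid being overly broad or vague
- Write the description in the third person (for example, “This skill processes…” rather than “Use this skill to…”)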

Optional Fields

---
name: "advanced-data-processor"
description: "Advanced data processing with ML algorithms"
version: "2.1.0"                          # Version number
author: "Data Team <data@company.com>"    # Author information
tags: ["data", "ml", "processing"]        # Classification tags
created: "2024-01-15"                     # Creation date
updated: "2024-03-20"                     # Last update date
license: "MIT"                            # License
homepage: "https://github.com/org/skill"  # Project home page
dependencies:                             # Dependencies
  required:
    - "file-handler:^1.0.0"
  optional:
    - "cloud-storage:>=2.0.0"
system_requirements:                      # System requirements
  python: ">=3.8"
  memory: ">=4GB"
  tools: ["pandas", "numpy"]
alwaysApply: false                        # Whether to always apply the skill
enabled: true                             # Whether the skill is enabled
priority: 100                             # Priority (higher number = higher priority)
---

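Field details:

| Field | Type | Default | Description |
|---|---|---|---|
| `version` | string | "1.0.0" | Follows semantic versioning |
| `author` | string | - | Author name and contact information |
| `tags` | array | [] | Tags used for classification and search |
| `created` | string | - | Creation date (YYYY-MM-DD) |
| `updated` | string | - | Last update date |
| `license` | string | - | License type |
| `homepage` | string | - | Project home page or documentation URL |
| `dependencies` | object | {} | Dependency definitions |
| `system_requirements` | object | {} | System environment requirements |
| `alwaysApply` | boolean | false | Whether the skill is loaded in every scenario |
| `enabled` | boolean | true | Whether the skill is enabled |
| `priority` | number | 0 | Skill priority, used for conflict resolution |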

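Dependency Configuration

dependencies:
  required:                              # Required dependencies
    - "file-processor:^2.0.0"            # Semantic version constraint
    - "image-handler:>=1.5.0,<2.0.0"     # Version range
  optional:                              # Optional dependencies
    - "cloud-storage:*"                  # Any version
    - "notification-service:~1.2.0"      # Approximately equivalent version

Version constraint syntax:

| Constraint | Meaning | Equivalent to |
|---|---|---|
| `^1.2.3` | Compatible version | `>=1.2.3 <2.0.0` |
| `~1.2.3` | Approximate version | `>=1.2.3 <1.3.0` |
| `>=1.2.0` | Minimum version | `>=1.2.0` |
| `1.2.0` | Exact version | `=1.2.0` |
| `*` | Any version | Any available version |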
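The constraint table can be turned into a small checker. The sketch below is an assumption-laden illustration rather than the system's resolver: `satisfies` and `parse_version` are hypothetical helpers, only plain `MAJOR.MINOR.PATCH` versions are handled, comma-separated clauses must all hold, and `^` follows the table above (it does not apply the special rules some package managers use for `0.x` versions).

```python
import operator
import re

# Longer operators come first so ">=" is not mistaken for ">".
COMPARATORS = (
    (">=", operator.ge),
    ("<=", operator.le),
    (">", operator.gt),
    ("<", operator.lt),
    ("=", operator.eq),
)


def parse_version(text: str) -> tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' into a comparable tuple of integers."""
    match = re.fullmatch(r"(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"not a MAJOR.MINOR.PATCH version: {text!r}")
    major, minor, patch = (int(group) for group in match.groups())
    return major, minor, patch


def _satisfies_clause(version: tuple[int, int, int], clause: str) -> bool:
    if clause == "*":
        return True
    if clause.startswith("^"):  # same major version, at least the base
        base = parse_version(clause[1:])
        return base <= version < (base[0] + 1, 0, 0)
    if clause.startswith("~"):  # same major.minor, at least the base
        base = parse_version(clause[1:])
        return base <= version < (base[0], base[1] + 1, 0)
    for symbol, compare in COMPARATORS:
        if clause.startswith(symbol):
            return compare(version, parse_version(clause[len(symbol):]))
    return version == parse_version(clause)  # bare version means exact match


def satisfies(version: str, constraint: str) -> bool:
    """Return True if the version meets every comma-separated clause."""
    parsed = parse_version(version)
    return all(_satisfies_clause(parsed, clause.strip()) for clause in constraint.split(","))
```

For example, `satisfies("1.7.0", ">=1.5.0,<2.0.0")` holds, while `satisfies("1.3.0", "~1.2.3")` does not.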

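Markdown Content Structure

Recommended Section Structure

# Skill Title

## Overview
A brief summary of what the skill does and what it is for

## Prerequisites
What must be in place before the skill can be used

## Core Capabilities
A list of the skill's core capabilities

## Usage Patterns
Common usage patterns and scenarios

## Configuration
Configuration parameters and options

## Examples
Concrete usage examples

## Troubleshooting
Common problems and their solutions

## Related Skills
References to related skills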

Content Writing Conventions

1. Use the imperative mood

✅ Correct:

## Usage

To process an image, follow these steps:

1. Validate the input format
2. Apply the transformation
3. Save the result

❌ Incorrect:

## Usage

You should process images by validating the input format first...

2. Provide concrete examples

✅ Correct:

## Examples

### Resize Image

```python
resize_image("input.jpg", width=800, height=600, output="resized.jpg")
```

❌ Incorrect:

## Examples

Use the resize function to change image dimensions.

3. Organize information in a structured way

✅ Correct:

## Configuration Parameters

| Parameter | Type | Default | Description |
|-----------|------|---------|-------------|
| `quality` | number | 85 | JPEG compression quality (1-100) |
| `format` | string | "jpeg" | Output format (jpeg, png, webp) |

❌ Incorrect:

## Configuration

You can set quality and format parameters...

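Resource File Organization

Scripts Directory

Holds executable scripts that the AI Agent can invoke directly:

Script Types and Uses

| Script type | Extension | Use | Example |
|---|---|---|---|
| Python script | .py | Data processing, API calls | process_data.py |
| Shell script | .sh | System operations, deployment | deploy.sh |
| JavaScript | .js | Front-end processing, Node.js | validate.js |
| PowerShell | .ps1 | Windows system administration | setup.ps1 |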

Script Conventions

#!/usr/bin/env python3
"""
Image processing utility script.

This script provides functions for basic image operations including
resize, crop, and format conversion.

Usage:
    python process_image.py --input image.jpg --output result.png --resize 800x600
"""

import argparse
import sys


def process_image(input_path, output_path, resize=None):
    """Placeholder: replace with the skill's actual processing logic."""
    raise NotImplementedError("process_image is not implemented in this template")


def main():
    """Main entry point for the script."""
    parser = argparse.ArgumentParser(description='Process images')
    parser.add_argument('--input', required=True, help='Input image path')
    parser.add_argument('--output', required=True, help='Output image path')
    parser.add_argument('--resize', help='Resize dimensions (WIDTHxHEIGHT)')

    args = parser.parse_args()

    try:
        # Processing logic
        process_image(args.input, args.output, args.resize)
        print(f"Successfully processed {args.input} -> {args.output}")
    except Exception as e:
        # Report the error on stderr and exit with a non-zero status
        print(f"Error: {e}", file=sys.stderr)
        sys.exit(1)


if __name__ == "__main__":
    main()

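References Directory

Holds detailed reference documents, which are loaded into the AI Agent's context when needed:

Document Types

| Document type | Use | Example file name |
|---|---|---|
| API documentation | Interface descriptions and how to call them | api-reference.md |
| Data schemas | Data structure definitions | schemas.json |
| Configuration guide | Detailed configuration parameters | configuration.md |
| Troubleshooting | Problem diagnosis and resolution | troubleshooting.md |
| Best practices | Usage advice and optimization | best-practices.md |

Example Reference Document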

# API Reference

## Authentication

All API calls require authentication using API keys:

```http

GET /api/v1/data

Authorization: Bearer YOUR_API_KEY

Content-Type: application/json

```

## Endpoints

### GET /api/v1/data

Retrieve data records with optional filtering.

**Parameters:**

- `limit` (integer, optional): Maximum number of records (default: 100)

- `offset` (integer, optional): Number of records to skip (default: 0)

- `filter` (string, optional): Filter expression

**Response:**

```json

{

"data": [...],

"total": 1234,

"has_more": true

}

```

**Error Codes:**

- `400`: Bad Request - Invalid parameters

- `401`: Unauthorized - Invalid API key

- `429`: Rate Limit Exceeded

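Assets Directory

Holds template files, configuration files, and other resources that are used to generate output:

Resource Types

| Resource type | Use | Example |
|---|---|---|
| Template files | Code generation templates | templates/component.tsx |
| Configuration templates | Configuration file templates | configs/nginx.conf.template |
| Style files | CSS and other style resources | styles/theme.css |
| Icon resources | Icons and images | icons/logo.svg |

Example Template File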

// templates/react-component.tsx

import React from 'react';

interface {{ComponentName}}Props {

{{#each props}}

{{name}}: {{type}};

{{/each}}

}

export const {{ComponentName}}: React.FC<{{ComponentName}}Props> = ({

{{#each props}}{{name}}{{#unless @last}}, {{/unless}}{{/each}}

}) => {

return (

<div className="{{kebabCase componentName}}">

{{content}}

</div>

);

};

export default {{ComponentName}};

Configuration Validation and Best Practices

Automatic Validation Rules

The Skills system automatically validates the following:

1. Metadata Validation

import re


class ValidationError(Exception):
    """Raised when a skill fails validation."""


def validate_metadata(metadata):
    """Validate skill metadata."""
    required_fields = ['name', 'description']
    for field in required_fields:
        if field not in metadata:
            raise ValidationError(f"Missing required field: {field}")

    # Validate the name format
    if not re.match(r'^[a-z0-9-]+$', metadata['name']):
        raise ValidationError("Name must use kebab-case format")

    # Validate the version format (MAJOR.MINOR.PATCH)
    if 'version' in metadata:
        if not re.match(r'^\d+\.\d+\.\d+$', metadata['version']):
            raise ValidationError("Version must follow semantic versioning")

2. Dependency Validation

def validate_dependencies(dependencies):
    """Validate dependencies (skill_exists and has_circular_dependency are assumed helpers)."""
    for dep in dependencies.get('required', []):
        if not skill_exists(dep):
            raise ValidationError(f"Required dependency not found: {dep}")

    # Check for circular dependencies
    if has_circular_dependency(dependencies):
        raise ValidationError("Circular dependency detected")

3. File Structure Validation

from pathlib import Path


def validate_file_structure(skill_path):
    """Validate the skill's file structure."""
    skill_path = Path(skill_path)
    skill_file = skill_path / "SKILL.md"
    if not skill_file.exists():
        raise ValidationError("SKILL.md file is required")

    # Optional entries, if present, must be directories
    for directory in ['scripts', 'references', 'assets']:
        dir_path = skill_path / directory
        if dir_path.exists() and not dir_path.is_dir():
            raise ValidationError(f"{directory} must be a directory")

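Development Best Practices

1. Skill Design Principles

- Single responsibility: each skill focuses on one specific domain or task
- Self-contained: a skill should include all the information needed to carry out its task
- Testable: provide clear input/output examples and test cases
- Well documented: include detailed usage instructions and a troubleshooting guide

2. Performance Recommendations

- Lean metadata: keep `description` concise but accurate
- Layered content: put detailed information in references rather than in the main file
- Resource compression: compress large resource files
- Cache friendly: avoid frequent changes to stable configuration content

3. Maintainability

- Version management: follow semantic versioning strictly
- Backward compatibility: keep the API backward compatible when updating
- Changelog: record the changes in every version
- Test coverage: provide test cases for key functionality

By following these format rules and best practices, developers can create high-quality, maintainable Skills that give AI Agents reliable capability extensions.

Examples

Theory is easier to absorb through practical cases. This chapter presents four complete Skills from different domains to show how to design and implement high-quality skill modules, covering image processing, data analysis, web development, and system operations.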

Example 1: Image Processing Skill (image-processor)

Overview

The image processing skill provides a full set of image operations, including format conversion, resizing, quality optimization, and batch processing.

Skill Configuration

---
name: "image-processor"
description: "Comprehensive image processing including resize, crop, format conversion, and batch operations"
version: "1.2.0"
author: "Media Team <media@company.com>"
tags: ["image", "processing", "media", "conversion"]
dependencies:
  required: []
  optional:
    - "cloud-storage:>=2.0.0"
system_requirements:
  python: ">=3.8"
  tools: ["pillow", "imagemagick"]
alwaysApply: false
enabled: true
priority: 80
---

Core Functionality

# Image Processing Skill

## Overview

This skill provides comprehensive image processing capabilities for AI agents, enabling automatic image manipulation, optimization, and batch processing operations.

## Core Capabilities

### Format Conversion

- JPEG ↔ PNG ↔ WebP ↔ TIFF

- Automatic format detection

- Quality preservation options

### Size Operations

- Intelligent resizing with aspect ratio preservation

- Smart cropping with focus detection

- Thumbnail generation with multiple sizes

### Quality Optimization

- Lossless compression

- Progressive JPEG encoding

- WebP optimization for web delivery

### Batch Processing

- Directory-based batch operations

- Parallel processing for large datasets

- Progress tracking and error handling

## Usage Patterns

### Single Image Processing

When processing individual images:

1. Validate input format and accessibility

2. Determine required operations based on user intent

3. Apply transformations in optimal sequence

4. Validate output quality and file size

### Batch Operations

For multiple images:

1. Scan source directory for supported formats

2. Create processing queue with priority ordering

3. Execute operations with parallel processing

4. Generate summary report with statistics

## Configuration Parameters

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `max_width` | integer | 1920 | Maximum output width in pixels |

| `max_height` | integer | 1080 | Maximum output height in pixels |

| `quality` | integer | 85 | JPEG/WebP quality (1-100) |

| `preserve_metadata` | boolean | false | Keep EXIF data in output |

| `progressive` | boolean | true | Use progressive encoding |

| `optimize` | boolean | true | Enable optimization algorithms |

## Error Handling

### Common Issues

- **Unsupported Format**: Provide format conversion suggestions

- **File Too Large**: Offer compression or resizing options

- **Corrupted Image**: Attempt repair or request replacement

- **Insufficient Memory**: Use streaming processing for large files

### Recovery Strategies

- Automatic fallback to alternative processing methods

- Graceful degradation with quality trade-offs

- Detailed error reporting with suggested solutions

Script Implementation

#!/usr/bin/env python3
# scripts/process_image.py
"""
Advanced image processing script with comprehensive format support.
"""

import argparse
import logging
import sys
from pathlib import Path

from PIL import Image, ImageOps

# Configure logging
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)


class ImageProcessor:
    """Advanced image processor."""

    SUPPORTED_FORMATS = {'.jpg', '.jpeg', '.png', '.webp', '.tiff', '.bmp'}

    def __init__(self, max_width=1920, max_height=1080, quality=85):
        self.max_width = max_width
        self.max_height = max_height
        self.quality = quality

    def process_image(self, input_path, output_path, operations=None):
        """Process a single image."""
        output_path = Path(output_path)
        try:
            with Image.open(input_path) as img:
                # Auto-rotate based on the EXIF orientation tag
                img = ImageOps.exif_transpose(img)

                # Apply the requested operations in order
                for operation in operations or []:
                    img = self._apply_operation(img, operation)

                # Save the result
                save_kwargs = {'quality': self.quality, 'optimize': True}
                if output_path.suffix.lower() in ('.jpg', '.jpeg'):
                    save_kwargs['progressive'] = True
                    if img.mode in ('RGBA', 'LA', 'P'):
                        # JPEG cannot store alpha or palette images
                        img = img.convert('RGB')

                img.save(output_path, **save_kwargs)
                logger.info(f"Successfully processed: {input_path} -> {output_path}")

        except Exception as e:
            logger.error(f"Error processing {input_path}: {e}")
            raise

    def _apply_operation(self, img, operation):
        """Apply a single image operation."""
        op_type = operation.get('type')

        if op_type == 'resize':
            return self._resize_image(img, operation)
        elif op_type == 'crop':
            return self._crop_image(img, operation)
        elif op_type == 'rotate':
            return img.rotate(operation.get('angle', 0), expand=True)
        else:
            logger.warning(f"Unknown operation: {op_type}")
            return img

    def _resize_image(self, img, operation):
        """Resize an image, preserving the aspect ratio by default."""
        target_width = operation.get('width', self.max_width)
        target_height = operation.get('height', self.max_height)
        preserve_aspect = operation.get('preserve_aspect', True)

        if preserve_aspect:
            # thumbnail() resizes in place and never enlarges the image
            img.thumbnail((target_width, target_height), Image.Resampling.LANCZOS)
        else:
            img = img.resize((target_width, target_height), Image.Resampling.LANCZOS)

        return img

    def _crop_image(self, img, operation):
        """Crop to the (left, upper, right, lower) box in operation['box'].

        The original listing calls this method without defining it; the
        'box' key is an assumed parameter name.
        """
        box = operation.get('box')
        return img.crop(box) if box else img

    def batch_process(self, input_dir, output_dir, operations=None):
        """Process every supported image in a directory."""
        input_path = Path(input_dir)
        output_path = Path(output_dir)
        output_path.mkdir(parents=True, exist_ok=True)

        # Find supported image files (case-insensitive extension match)
        image_files = sorted(
            p for p in input_path.iterdir()
            if p.is_file() and p.suffix.lower() in self.SUPPORTED_FORMATS
        )

        logger.info(f"Found {len(image_files)} images to process")

        # Process each file, continuing past individual failures
        success_count = 0
        for img_file in image_files:
            try:
                output_file = output_path / img_file.name
                self.process_image(img_file, output_file, operations)
                success_count += 1
            except Exception as e:
                logger.error(f"Failed to process {img_file}: {e}")

        logger.info(f"Successfully processed {success_count}/{len(image_files)} images")


def main():
    parser = argparse.ArgumentParser(description='Advanced Image Processor')
    parser.add_argument('--input', required=True, help='Input file or directory')
    parser.add_argument('--output', required=True, help='Output file or directory')
    parser.add_argument('--resize', help='Resize to WIDTHxHEIGHT')
    parser.add_argument('--quality', type=int, default=85, help='Output quality (1-100)')
    parser.add_argument('--batch', action='store_true', help='Batch process directory')

    args = parser.parse_args()

    # Create the processor
    processor = ImageProcessor(quality=args.quality)

    # Build the operation list
    operations = []
    if args.resize:
        width, height = map(int, args.resize.split('x'))
        operations.append({
            'type': 'resize',
            'width': width,
            'height': height,
            'preserve_aspect': True
        })

    try:
        if args.batch:
            processor.batch_process(args.input, args.output, operations)
        else:
            processor.process_image(Path(args.input), Path(args.output), operations)
    except Exception as e:
        logger.error(f"Processing failed: {e}")
        sys.exit(1)


if __name__ == "__main__":
    main()

Example 2: Data Analysis Skill (data-analyzer)

Overview

The data analysis skill provides data processing and analysis across multiple data formats, including statistical analysis, visualization, and report generation.

Skill Configuration

---
name: "data-analyzer"
description: "Comprehensive data analysis including statistics, visualization, and automated reporting"
version: "2.0.1"
author: "Analytics Team <analytics@company.com>"
tags: ["data", "analysis", "statistics", "visualization"]
dependencies:
  required:
    - "file-processor:^1.0.0"
  optional:
    - "database-connector:>=2.1.0"
    - "cloud-storage:*"
system_requirements:
  python: ">=3.9"
  memory: ">=8GB"
  tools: ["pandas", "numpy", "matplotlib", "seaborn"]
alwaysApply: false
enabled: true
priority: 90
---

Core Analysis Capabilities

# Data Analysis Skill

## Overview

Advanced data analysis capabilities for AI agents, providing statistical analysis, data visualization, and automated report generation from various data sources.

## Core Capabilities

### Data Import & Processing

- Multi-format support: CSV, Excel, JSON, Parquet, SQL databases

- Automatic data type detection and conversion

- Missing value handling with multiple strategies

- Data validation and quality assessment

### Statistical Analysis

- Descriptive statistics with distribution analysis

- Correlation analysis and feature relationships

- Hypothesis testing and significance analysis

- Time series analysis and forecasting

### Data Visualization

- Automatic chart type selection based on data characteristics

- Interactive dashboards with drill-down capabilities

- Statistical plots: histograms, box plots, scatter matrices

- Time series visualizations with trend analysis

### Report Generation

- Automated insights discovery and narrative generation

- Executive summary with key findings

- Statistical appendix with detailed analysis

- Export to multiple formats: PDF, HTML, PowerPoint

## Usage Patterns

### Exploratory Data Analysis

For initial data exploration:

1. Load and validate data integrity

2. Generate descriptive statistics summary

3. Identify patterns, outliers, and anomalies

4. Create visualization suite for key relationships

5. Produce preliminary insights report

### Comparative Analysis

For comparing datasets or time periods:

1. Align data structures and time ranges

2. Calculate comparative metrics and ratios

3. Perform statistical significance tests

4. Generate side-by-side visualizations

5. Summarize key differences and trends

### Predictive Analysis

For forecasting and trend analysis:

1. Prepare time series data with proper indexing

2. Apply appropriate forecasting models

3. Validate model performance with cross-validation

4. Generate confidence intervals and scenarios

5. Create forecast visualizations with uncertainty bands

## Configuration Parameters

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `confidence_level` | float | 0.95 | Statistical confidence level |

| `missing_threshold` | float | 0.1 | Maximum missing data ratio |

| `outlier_method` | string | "iqr" | Outlier detection method |

| `chart_style` | string | "seaborn" | Visualization style theme |

| `auto_insights` | boolean | true | Enable automatic insights |

| `export_format` | string | "pdf" | Default report format |

分析脚本实现

# scripts/analyze_data.py

#!/usr/bin/env python3

"""

Comprehensive data analysis script with automated insights.

"""

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from pathlib import Path

import argparse

import json

from datetime import datetime

class DataAnalyzer:

"""高级数据分析器"""

def __init__(self, confidence_level=0.95, missing_threshold=0.1):

self.confidence_level = confidence_level

self.missing_threshold = missing_threshold

self.insights = []

# 设置可视化样式

plt.style.use('seaborn-v0_8')

sns.set_palette("husl")

def load_data(self, file_path):

"""智能加载数据文件"""

path = Path(file_path)

if path.suffix.lower() == '.csv':

return pd.read_csv(path)

elif path.suffix.lower() in ['.xlsx', '.xls']:

return pd.read_excel(path)

elif path.suffix.lower() == '.json':

return pd.read_json(path)

elif path.suffix.lower() == '.parquet':

return pd.read_parquet(path)

else:

raise ValueError(f"Unsupported file format: {path.suffix}")

def analyze_data_quality(self, df):

"""分析数据质量"""

quality_report = {

'total_rows': len(df),

'total_columns': len(df.columns),

'missing_data': {},

'data_types': {},

'duplicates': df.duplicated().sum()

}

# 分析缺失值

for col in df.columns:

missing_count = df[col].isnull().sum()

missing_ratio = missing_count / len(df)

quality_report['missing_data'][col] = {

'count': missing_count,

'ratio': missing_ratio

}

if missing_ratio > self.missing_threshold:

self.insights.append(f"Column '{col}' has {missing_ratio:.1%} missing values")

# 分析数据类型

for col in df.columns:

quality_report['data_types'][col] = str(df[col].dtype)

return quality_report

def descriptive_analysis(self, df):

"""描述性统计分析"""

numeric_cols = df.select_dtypes(include=[np.number]).columns

categorical_cols = df.select_dtypes(include=['object', 'category']).columns

analysis = {

'numeric_summary': {},

'categorical_summary': {}

}

# 数值型变量分析

if len(numeric_cols) > 0:

analysis['numeric_summary'] = df[numeric_cols].describe().to_dict()

# 检测异常值

for col in numeric_cols:

Q1 = df[col].quantile(0.25)

Q3 = df[col].quantile(0.75)

IQR = Q3 - Q1

outliers = df[(df[col] < Q1 - 1.5*IQR) | (df[col] > Q3 + 1.5*IQR)]

if len(outliers) > 0:

outlier_ratio = len(outliers) / len(df)

self.insights.append(f"Column '{col}' has {outlier_ratio:.1%} outliers")

# 分类变量分析

if len(categorical_cols) > 0:

for col in categorical_cols:

value_counts = df[col].value_counts()

analysis['categorical_summary'][col] = {

'unique_values': df[col].nunique(),

'most_frequent': value_counts.index[0] if len(value_counts) > 0 else None,

'frequency': value_counts.iloc[0] if len(value_counts) > 0 else 0

}

return analysis

def correlation_analysis(self, df):

"""相关性分析"""

numeric_cols = df.select_dtypes(include=[np.number]).columns

if len(numeric_cols) < 2:

return None

correlation_matrix = df[numeric_cols].corr()

# 找出强相关关系

strong_correlations = []

for i in range(len(correlation_matrix.columns)):

for j in range(i+1, len(correlation_matrix.columns)):

corr_value = correlation_matrix.iloc[i, j]

if abs(corr_value) > 0.7: # 强相关阈值

strong_correlations.append({

'var1': correlation_matrix.columns[i],

'var2': correlation_matrix.columns[j],

'correlation': corr_value

})

if strong_correlations:

for corr in strong_correlations:

self.insights.append(

f"Strong correlation ({corr['correlation']:.3f}) between "

f"'{corr['var1']}' and '{corr['var2']}'"

)

return correlation_matrix

def create_visualizations(self, df, output_dir):

"""创建可视化图表"""

output_path = Path(output_dir)

output_path.mkdir(parents=True, exist_ok=True)

numeric_cols = df.select_dtypes(include=[np.number]).columns

categorical_cols = df.select_dtypes(include=['object', 'category']).columns

# 数值变量分布图

if len(numeric_cols) > 0:

fig, axes = plt.subplots(2, 2, figsize=(15, 12))

fig.suptitle('Numeric Variables Distribution', fontsize=16)

for i, col in enumerate(numeric_cols[:4]):

row, col_idx = divmod(i, 2)

# 直方图

axes[row, col_idx].hist(df[col].dropna(), bins=30, alpha=0.7)

axes[row, col_idx].set_title(f'Distribution of {col}')

axes[row, col_idx].set_xlabel(col)

axes[row, col_idx].set_ylabel('Frequency')

plt.tight_layout()

plt.savefig(output_path / 'numeric_distributions.png', dpi=300, bbox_inches='tight')

plt.close()

# Correlation heatmap

if len(numeric_cols) > 1:

plt.figure(figsize=(12, 10))

correlation_matrix = df[numeric_cols].corr()

sns.heatmap(correlation_matrix, annot=True, cmap='coolwarm', center=0,

square=True, linewidths=0.5)

plt.title('Correlation Matrix Heatmap')

plt.tight_layout()

plt.savefig(output_path / 'correlation_heatmap.png', dpi=300, bbox_inches='tight')

plt.close()

# Bar charts for categorical variables (first four columns only)

if len(categorical_cols) > 0:

fig, axes = plt.subplots(2, 2, figsize=(15, 12))

fig.suptitle('Categorical Variables Distribution', fontsize=16)

for i, col in enumerate(categorical_cols[:4]):

row, col_idx = divmod(i, 2)

value_counts = df[col].value_counts().head(10)

axes[row, col_idx].bar(range(len(value_counts)), value_counts.values)

axes[row, col_idx].set_title(f'Distribution of {col}')

axes[row, col_idx].set_xlabel(col)

axes[row, col_idx].set_ylabel('Count')

axes[row, col_idx].set_xticks(range(len(value_counts)))

axes[row, col_idx].set_xticklabels(value_counts.index, rotation=45)

plt.tight_layout()

plt.savefig(output_path / 'categorical_distributions.png', dpi=300, bbox_inches='tight')

plt.close()

def generate_report(self, df, output_path):

"""Generate the analysis report."""

quality_report = self.analyze_data_quality(df)

descriptive_stats = self.descriptive_analysis(df)

correlation_matrix = self.correlation_analysis(df)

report = {

'analysis_timestamp': datetime.now().isoformat(),

'data_quality': quality_report,

'descriptive_statistics': descriptive_stats,

'insights': self.insights,

'recommendations': self._generate_recommendations(df)

}

# Save the report as JSON

with open(output_path, 'w', encoding='utf-8') as f:

json.dump(report, f, indent=2, ensure_ascii=False, default=str)

return report

def _generate_recommendations(self, df):

"""Generate data-handling recommendations."""

recommendations = []

# Recommendations based on data quality

missing_cols = [col for col in df.columns

if df[col].isnull().sum() / len(df) > self.missing_threshold]

if missing_cols:

recommendations.append(f"Consider handling missing values in: {', '.join(missing_cols)}")

# Recommendations based on data distribution

numeric_cols = df.select_dtypes(include=[np.number]).columns

for col in numeric_cols:

skewness = df[col].skew()

if abs(skewness) > 2:

recommendations.append(f"Column '{col}' is highly skewed, consider transformation")

return recommendations

def main():

parser = argparse.ArgumentParser(description='Comprehensive Data Analyzer')

parser.add_argument('--input', required=True, help='Input data file')

parser.add_argument('--output', required=True, help='Output directory')

parser.add_argument('--confidence', type=float, default=0.95, help='Confidence level')

args = parser.parse_args()

# Create the analyzer

analyzer = DataAnalyzer(confidence_level=args.confidence)

try:

# Load the data

df = analyzer.load_data(args.input)

print(f"Loaded data: {len(df)} rows, {len(df.columns)} columns")

# Create the output directory

output_dir = Path(args.output)

output_dir.mkdir(parents=True, exist_ok=True)

# Generate the charts

analyzer.create_visualizations(df, output_dir / 'charts')

# Generate the report

report = analyzer.generate_report(df, output_dir / 'analysis_report.json')

print(f"Analysis complete. Results saved to: {output_dir}")

print(f"Generated {len(analyzer.insights)} insights")

except Exception as e:

print(f"Analysis failed: {e}")

sys.exit(1)

if __name__ == "__main__":

main()
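The outlier check in the script relies on the 1.5 × IQR rule. The sketch below isolates that rule using only the standard library, so it can be tried without pandas. The function name `iqr_outliers` is hypothetical, and it assumes the script's `Q1` and `Q3` are linearly interpolated quartiles (their computation is not shown in this part of the script).

```python
from statistics import quantiles


def iqr_outliers(values, k=1.5):
    """Return the values lying outside [Q1 - k*IQR, Q3 + k*IQR]."""
    # method="inclusive" interpolates linearly between data points,
    # treating the sample as including its own minimum and maximum.
    q1, _median, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    # Keep the original order of the input
    return [v for v in values if v < lower or v > upper]


sample = [10, 12, 11, 13, 12, 11, 95]
print(iqr_outliers(sample))  # prints [95]
```

For the sample above, Q1 is 11 and Q3 is 12.5, so the accepted range is 8.75 to 14.75 and only 95 falls outside it.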

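Example 3: Web Development Skill (web-builder)

Overview

The web development skill supports rapid construction of modern web applications, covering frontend framework integration, backend API development, and deployment automation.

Skill Configuration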

---

name: "web-builder"

description: "Modern web application development with React, Node.js, and automated deployment"

version: "3.1.2"

author: "Frontend Team <frontend@company.com>"

tags: ["web", "react", "nodejs", "deployment", "fullstack"]

dependencies:

required:

- "file-processor:^1.0.0"

optional:

- "database-connector:>=2.0.0"

- "cloud-deployment:>=1.5.0"

system_requirements:

node: ">=16.0.0"

npm: ">=8.0.0"

memory: ">=4GB"

alwaysApply: false

enabled: true

priority: 85

---
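The dependency entries above use a `name:constraint` form with `^`, `>=`, and (elsewhere in this article) `*`. The article does not specify how these constraints are resolved, so the following is only a sketch under assumptions: `parse_version` and `satisfies` are hypothetical names, and the caret rule is simplified to "same major version, not older than the stated version".

```python
import re


def parse_version(text):
    """Turn 'MAJOR.MINOR.PATCH' into a tuple of three ints."""
    match = re.fullmatch(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"not a MAJOR.MINOR.PATCH version: {text!r}")
    return tuple(int(part) for part in match.groups())


def satisfies(installed, spec):
    """Check an installed version against a 'name:constraint' entry."""
    _name, _sep, constraint = spec.partition(":")
    have = parse_version(installed)
    if constraint == "*":
        return True
    if constraint.startswith("^"):
        want = parse_version(constraint[1:])
        # Simplified caret rule: same major version and not older.
        # (Special handling of 0.x versions is deliberately left out.)
        return have[0] == want[0] and have >= want
    if constraint.startswith(">="):
        return have >= parse_version(constraint[2:])
    raise ValueError(f"unsupported constraint: {constraint!r}")


print(satisfies("1.4.2", "file-processor:^1.0.0"))       # prints True
print(satisfies("1.9.9", "database-connector:>=2.0.0"))  # prints False
```

Comparing versions as integer tuples avoids the classic string-comparison mistake where "1.10.0" sorts before "1.9.0".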

Development Capabilities

# Web Development Skill

## Overview

Comprehensive web development capabilities for building modern, responsive web applications using React, Node.js, and automated deployment pipelines.

## Core Capabilities

### Frontend Development

- React component generation with TypeScript support

- Responsive design with CSS-in-JS or Tailwind CSS

- State management with Redux Toolkit or Zustand

- Routing with React Router and protected routes

- Form handling with validation and error management

### Backend Development

- RESTful API development with Express.js

- GraphQL API with Apollo Server

- Authentication and authorization (JWT, OAuth)

- Database integration (MongoDB, PostgreSQL, MySQL)

- File upload and processing capabilities

### Development Tools

- Hot reload development server

- Code linting and formatting (ESLint, Prettier)

- Testing setup (Jest, React Testing Library)

- Build optimization and bundling

- Environment configuration management

### Deployment & DevOps

- Docker containerization

- CI/CD pipeline configuration

- Cloud platform deployment (Vercel, Netlify, AWS)

- Performance monitoring and analytics

- Error tracking and logging

## Usage Patterns

### Single Page Application (SPA)

For building interactive web applications:

1. Initialize React project with TypeScript template

2. Set up routing structure and navigation

3. Create reusable component library

4. Implement state management and API integration

5. Add authentication and user management

6. Configure build and deployment pipeline

### Full-Stack Application

For complete web solutions:

1. Set up monorepo structure with frontend and backend

2. Design database schema and API endpoints

3. Implement backend services with proper validation

4. Create frontend components consuming APIs

5. Add comprehensive testing coverage

6. Configure production deployment with monitoring

### Static Site Generation

For content-focused websites:

1. Set up Next.js or Gatsby project

2. Configure content management system integration

3. Create dynamic page generation from content

4. Optimize for SEO and performance

5. Set up automated content deployment

6. Add analytics and performance monitoring

## Project Templates

### React TypeScript SPA Template

```json

{

"name": "react-spa-template",

"structure": {

"src/": {

"components/": "Reusable UI components",

"pages/": "Route-based page components",

"hooks/": "Custom React hooks",

"services/": "API service functions",

"store/": "State management",

"types/": "TypeScript type definitions",

"utils/": "Utility functions"

},

"public/": "Static assets",

"tests/": "Test files"

}

}

```

### Node.js API Template

{

"name": "nodejs-api-template",

"structure": {

"src/": {

"controllers/": "Request handlers",

"models/": "Data models",

"routes/": "API route definitions",

"middleware/": "Custom middleware",

"services/": "Business logic",

"config/": "Configuration files",

"utils/": "Utility functions"

},

"tests/": "API tests",

"docs/": "API documentation"

}

}

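Example 4: System Administration Skill (system-admin)

Overview

The system administration skill provides server management and operations automation, including monitoring, deployment, backup, and troubleshooting.

Skill Configuration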

---

name: "system-admin"

description: "Comprehensive system administration including monitoring, deployment, backup, and troubleshooting"

version: "2.3.0"

author: "DevOps Team <devops@company.com>"

tags: ["devops", "monitoring", "deployment", "backup", "linux"]

dependencies:

required:

- "file-processor:^1.0.0"

optional:

- "notification-service:>=1.2.0"

- "cloud-storage:>=2.0.0"

system_requirements:

os: "linux"

shell: "bash"

tools: ["docker", "systemctl", "crontab"]

alwaysApply: false

enabled: true

priority: 95

---
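The `tools` list under `system_requirements` suggests a pre-flight check before the skill is activated. How the Skills system performs that check is not described here; one plausible sketch, using only `shutil.which` from the standard library and a hypothetical helper name `missing_tools`, is:

```python
import shutil


def missing_tools(tools):
    """Return the entries of `tools` that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


required = ["docker", "systemctl", "crontab"]
missing = missing_tools(required)
if missing:
    print("Missing required tools:", ", ".join(missing))
else:
    print("All required tools are available")
```

Reporting every missing tool at once, rather than stopping at the first, saves the operator repeated install-and-retry cycles.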

Operations Script

#!/bin/bash

# scripts/system_monitor.sh

# System monitoring and health check script

set -euo pipefail

# Configuration

ALERT_THRESHOLD_CPU=80

ALERT_THRESHOLD_MEMORY=85

ALERT_THRESHOLD_DISK=90

LOG_FILE="/var/log/system_monitor.log"

REPORT_FILE="/tmp/system_report.json"

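# Logging helper. It writes to stderr so that log lines never end up inside the JSON fragments captured from stdout.

log() {

echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a "$LOG_FILE" >&2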

}

# Check CPU usage

check_cpu_usage() {

local cpu_usage

cpu_usage=$(top -bn1 | grep "Cpu(s)" | awk '{print $2}' | sed 's/%us,//')

cpu_usage=${cpu_usage%.*} # strip the fractional part

echo "\"cpu_usage\": $cpu_usage,"

if [ "$cpu_usage" -gt "$ALERT_THRESHOLD_CPU" ]; then

log "WARNING: High CPU usage detected: ${cpu_usage}%"

return 1

fi

return 0

}

# Check memory usage

check_memory_usage() {

local memory_info

memory_info=$(free | grep Mem)

local total=$(echo $memory_info | awk '{print $2}')

local used=$(echo $memory_info | awk '{print $3}')

local memory_usage=$((used * 100 / total))

echo "\"memory_usage\": $memory_usage,"

echo "\"memory_total_gb\": $((total / 1024 / 1024)),"

echo "\"memory_used_gb\": $((used / 1024 / 1024)),"

if [ "$memory_usage" -gt "$ALERT_THRESHOLD_MEMORY" ]; then

log "WARNING: High memory usage detected: ${memory_usage}%"

return 1

fi

return 0

}

# Check disk usage

check_disk_usage() {

local disk_usage

disk_usage=$(df -h / | awk 'NR==2 {print $5}' | sed 's/%//')

echo "\"disk_usage\": $disk_usage,"

if [ "$disk_usage" -gt "$ALERT_THRESHOLD_DISK" ]; then

log "WARNING: High disk usage detected: ${disk_usage}%"

return 1

fi

return 0

}

# Check the status of system services

check_services() {

local services=("nginx" "mysql" "redis" "docker")

local service_status="\"services\": {"

for service in "${services[@]}"; do

if systemctl is-active --quiet "$service"; then

service_status+="\n \"$service\": \"active\","

else

service_status+="\n \"$service\": \"inactive\","

log "WARNING: Service $service is not running"

fi

done

service_status=${service_status%,} # drop the trailing comma

service_status+="\n },"

echo -e "$service_status"

}

# Check network connectivity

check_network() {

local ping_result

if ping -c 1 8.8.8.8 >/dev/null 2>&1; then

ping_result="true"

else

ping_result="false"

log "WARNING: Network connectivity issue detected"

fi

echo "\"network_connectivity\": $ping_result,"

}

# Generate the system report

generate_report() {

local timestamp=$(date -u +"%Y-%m-%dT%H:%M:%SZ")

cat > "$REPORT_FILE" << EOF

{

"timestamp": "$timestamp",

"hostname": "$(hostname)",

"uptime": "$(uptime -p)",

$(check_cpu_usage)

$(check_memory_usage)

$(check_disk_usage)

$(check_network)

$(check_services)

"load_average": "$(uptime | awk -F'load average:' '{print $2}' | xargs)"

}

EOF

log "System report generated: $REPORT_FILE"

}

# Main function

main() {

log "Starting system health check..."

local exit_code=0

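# Run every check for its exit status. The JSON fragments are discarded here because generate_report collects them itself.

check_cpu_usage >/dev/null || exit_code=1

check_memory_usage >/dev/null || exit_code=1

check_disk_usage >/dev/null || exit_code=1

check_network >/dev/null || exit_code=1

check_services >/dev/null || exit_code=1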

# Generate the report

generate_report

if [ $exit_code -eq 0 ]; then

log "System health check completed successfully"

else

log "System health check completed with warnings"

fi

exit $exit_code

}

# Script entry point

if [[ "${BASH_SOURCE[0]}" == "${0}" ]]; then

main "$@"

fi
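The monitoring script assembles its JSON report by concatenating strings, which is easy to get wrong (trailing commas, unescaped quotes). As an alternative sketch, the same threshold logic can be expressed in Python and serialized with `json.dumps`. The threshold values mirror the script's `ALERT_THRESHOLD_*` settings; the function name `build_report` and the `warnings` and `healthy` fields are assumptions, not part of the original report format.

```python
import json

# Same limits as ALERT_THRESHOLD_CPU / MEMORY / DISK in the shell script
THRESHOLDS = {"cpu_usage": 80, "memory_usage": 85, "disk_usage": 90}


def build_report(metrics, thresholds=THRESHOLDS):
    """Attach threshold warnings to a metrics dict and serialize it as JSON."""
    warnings = [
        f"High {name}: {metrics[name]}% (limit {limit}%)"
        for name, limit in thresholds.items()
        # Strictly greater than, matching the script's -gt comparison
        if name in metrics and metrics[name] > limit
    ]
    report = dict(metrics, warnings=warnings, healthy=not warnings)
    return json.dumps(report, indent=2)


print(build_report({"cpu_usage": 91, "memory_usage": 40, "disk_usage": 90}))
```

Because the serializer handles quoting and commas, the report stays valid JSON even when a hostname or service name contains unusual characters.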

Deployment Automation Script

#!/bin/bash

# scripts/deploy_application.sh

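# Application deployment automation script

# -E (errtrace) lets the ERR trap set in main() also fire inside the functions it calls

set -Eeuo pipefail

# Configuration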

APP_NAME="${APP_NAME:-myapp}"

DEPLOY_ENV="${DEPLOY_ENV:-production}"

DOCKER_REGISTRY="${DOCKER_REGISTRY:-registry.company.com}"

BACKUP_DIR="/opt/backups"

LOG_FILE="/var/log/deploy_${APP_NAME}.log"

# Colored output

RED='\033[0;31m'

GREEN='\033[0;32m'

YELLOW='\033[1;33m'

NC='\033[0m' # No Color

# Logging helpers

log() {

echo -e "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a "$LOG_FILE"

}

error() {

log "${RED}ERROR: $1${NC}"

}

success() {

log "${GREEN}SUCCESS: $1${NC}"

}

warning() {

log "${YELLOW}WARNING: $1${NC}"

}

# Pre-deployment checks

pre_deployment_checks() {

log "Running pre-deployment checks..."

# Check that Docker is running

if ! docker info >/dev/null 2>&1; then

error "Docker is not running"

exit 1

fi

# Check disk space

local disk_usage=$(df / | awk 'NR==2 {print $5}' | sed 's/%//')

if [ "$disk_usage" -gt 85 ]; then

error "Insufficient disk space: ${disk_usage}% used"

exit 1

fi

# Check that the registry is reachable

if ! curl -s --connect-timeout 5 "$DOCKER_REGISTRY" >/dev/null; then

error "Cannot connect to Docker registry: $DOCKER_REGISTRY"

exit 1

fi

success "Pre-deployment checks passed"

}

# Back up the current version

backup_current_version() {

log "Creating backup of current version..."

local backup_name="${APP_NAME}_$(date +%Y%m%d_%H%M%S)"

local backup_path="$BACKUP_DIR/$backup_name"

mkdir -p "$backup_path"

# Back up configuration files

if [ -d "/opt/$APP_NAME/config" ]; then

cp -r "/opt/$APP_NAME/config" "$backup_path/"

fi

# Back up the database (if present)

if command -v mysqldump >/dev/null 2>&1; then

mysqldump --single-transaction "$APP_NAME" > "$backup_path/database.sql" 2>/dev/null || true

fi

# Record the currently running containers

docker ps --filter "name=$APP_NAME" --format "table {{.Names}}\t{{.Image}}\t{{.Status}}" > "$backup_path/containers.txt"

success "Backup created: $backup_path"

echo "$backup_path" > "/tmp/last_backup_path"

}

# Pull the new image

pull_new_image() {

local image_tag="${1:-latest}"

local full_image="$DOCKER_REGISTRY/$APP_NAME:$image_tag"

log "Pulling new image: $full_image"

if docker pull "$full_image"; then

success "Image pulled successfully"

echo "$full_image" > "/tmp/new_image_name"

else

error "Failed to pull image: $full_image"

exit 1

fi

}

# Stop the old containers

stop_old_containers() {

log "Stopping old containers..."

local containers=$(docker ps -q --filter "name=$APP_NAME")

if [ -n "$containers" ]; then

docker stop $containers

docker rm $containers

success "Old containers stopped and removed"

else

log "No running containers found for $APP_NAME"

fi

}

# Start the new container

start_new_container() {

local image_name=$(cat "/tmp/new_image_name")

log "Starting new container with image: $image_name"

# Basic container start command (adjust for the specific application)
docker run -d \
  --name "${APP_NAME}_$(date +%s)" \
  --restart unless-stopped \
  -p 8080:8080 \
  -v "/opt/$APP_NAME/config:/app/config:ro" \
  -v "/opt/$APP_NAME/data:/app/data" \
  --env-file "/opt/$APP_NAME/.env" \
  "$image_name"

success "New container started"

}

# Health check

health_check() {

log "Performing health check..."

local max_attempts=30

local attempt=1

while [ $attempt -le $max_attempts ]; do

if curl -s -f "http://localhost:8080/health" >/dev/null; then

success "Health check passed"

return 0

fi

log "Health check attempt $attempt/$max_attempts failed, retrying in 10 seconds..."

sleep 10

((attempt++))

done

error "Health check failed after $max_attempts attempts"

return 1

}

# Rollback

rollback() {

error "Deployment failed, initiating rollback..."

# Stop the new containers

local new_containers=$(docker ps -q --filter "name=$APP_NAME")

if [ -n "$new_containers" ]; then

docker stop $new_containers

docker rm $new_containers

fi

# Restore from backup (simplified here; a real rollback needs more elaborate logic)

warning "Manual rollback may be required"

exit 1

}

# Clean up old images

cleanup_old_images() {

log "Cleaning up old images..."

# Keep the three most recent versions

docker images "$DOCKER_REGISTRY/$APP_NAME" --format "{{.ID}}" | tail -n +4 | xargs -r docker rmi

# Remove dangling images

docker image prune -f

success "Image cleanup completed"

}

# Main deployment flow

main() {

local image_tag="${1:-latest}"

log "Starting deployment of $APP_NAME:$image_tag to $DEPLOY_ENV environment"

# Set up error handling

trap rollback ERR

# Run the deployment steps

pre_deployment_checks

backup_current_version

pull_new_image "$image_tag"

stop_old_containers

start_new_container

# Health check

if health_check; then

cleanup_old_images

success "Deployment completed successfully"

else

rollback

fi

# Remove temporary files

rm -f "/tmp/new_image_name" "/tmp/last_backup_path"

}

# Script entry point

if [[ "${BASH_SOURCE[0]}" == "${0}" ]]; then

main "$@"

fi
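The `health_check` function in the deployment script is a bounded retry loop: probe, wait, probe again, give up after a fixed number of attempts. The sketch below shows the same pattern in Python with the probe and the sleep function injected, which makes the loop testable without a running service. The name `wait_until_healthy` and its parameters are hypothetical; the defaults mirror the script's 30 attempts and 10-second delay.

```python
import time


def wait_until_healthy(probe, max_attempts=30, delay=10.0, sleep=time.sleep):
    """Call `probe()` until it returns True or the attempts run out.

    Returns the number of attempts that were needed, or None on failure.
    """
    for attempt in range(1, max_attempts + 1):
        if probe():
            return attempt
        # No point in sleeping after the final failed attempt
        if attempt < max_attempts:
            sleep(delay)
    return None


# Usage with a fake probe that succeeds on the third call
answers = iter([False, False, True])
attempts = wait_until_healthy(lambda: next(answers), delay=0)
print(attempts)  # prints 3
```

In a real deployment the probe would wrap an HTTP request to the application's health endpoint; keeping it as a parameter separates the retry policy from the transport.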

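These four examples show how the Skills system applies across different domains. Each one walks through the full path from configuration to implementation, and each can serve as a reference template when developing a new Skill.

Best Practices

This chapter collects best practices for Skills development, covering design principles, development conventions, performance optimization, quality assurance, maintenance strategy, and security.

Design Principles

1. Single Responsibility Principle

Each Skill should focus on one specific domain or task, and avoid sprawling functionality or unclear responsibilities.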

# ✅ Good design: a skill focused on image processing

name: "image-processor"

description: "Image processing including resize, crop, format conversion, and optimization"

# ❌ Design to avoid: a skill with overly broad responsibilities

name: "media-handler"

description: "Handle images, videos, audio files, documents, and web scraping"

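Implementation advice:

- One Skill solves one class of problem

- Keep functional boundaries clear, with no overlap with other Skills

- If a Skill grows too complex, consider splitting it into several Skills

2. Self-Contained Principle

A Skill should contain all the information needed to carry out its task, reducing the need for external dependencies.

Core elements:

- Complete operating guide: detailed step-by-step instructions

- Configuration parameter documentation: the meaning and usage of every parameter

- Error handling guide: solutions to common problems

- Examples and templates: code samples that can be used directly

Implementation strategy:

# Include complete information in SKILL.md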

## Prerequisites

- System requirements

- Required tools and libraries

- Environment setup instructions

## Configuration

- All parameters with default values

- Parameter validation rules

- Environment-specific configurations

## Error Handling

- Common error scenarios

- Troubleshooting steps

- Recovery procedures

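3. Progressive Complexity Principle

A Skill's content should be organized from simple to complex, so that it serves users at different levels.

Content hierarchy:

Level 1: Basic usage (SKILL.md body)

├─ Quick start guide

├─ Basic configuration

└─ Common operation examples

Level 2: Advanced features (references/)

├─ Detailed API documentation

├─ Advanced configuration options

└─ Handling of complex scenarios

Level 3: Expert customization (scripts/ + assets/)

├─ Custom scripts

├─ Configuration templates

└─ Extension tools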
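The three levels above map onto concrete locations in a skill directory: `SKILL.md`, then `references/`, then `scripts/` and `assets/`. As a rough sketch of how a loader might honor that ordering, the function below lists the files needed up to a given level. The name `files_for_level` and the `LEVEL_SOURCES` table are hypothetical, not part of any documented loader.

```python
from pathlib import Path

# Which directory entries belong to which level (taken from the hierarchy above)
LEVEL_SOURCES = {
    1: ["SKILL.md"],
    2: ["references"],
    3: ["scripts", "assets"],
}


def files_for_level(skill_dir, level):
    """List the files needed at `level`, including those of all lower levels."""
    root = Path(skill_dir)
    found = []
    for current in range(1, level + 1):
        for name in LEVEL_SOURCES[current]:
            path = root / name
            if path.is_file():
                found.append(path)
            elif path.is_dir():
                # Sorted so that the result is stable across platforms
                found.extend(sorted(p for p in path.rglob("*") if p.is_file()))
            # Missing entries are simply skipped: every level is optional
    return [p.relative_to(root).as_posix() for p in found]
```

Calling `files_for_level("image-processor", 1)` on a typical skill directory would return only `SKILL.md`, so a reader or agent that needs just the basics never touches the heavier resources.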

Development Conventions

1. Naming Conventions

Skill Naming

- Use kebab-case

- Names should be descriptive yet concise

- Avoid abbreviations and jargon

✅ Recommended names:

- "database-migrator"

- "api-documentation-generator"

- "performance-monitor"

❌ Names to avoid:

- "db_mig"

- "APIDocGen"

- "perf-mon-sys"

File Naming

skill-directory/

├── SKILL.md # fixed name, uppercase

├── scripts/

│ ├── process_data.py # snake_case

│ └── deploy_service.sh # snake_case

├── references/

│ ├── api-reference.md # kebab-case

│ └── troubleshooting.md # kebab-case

└── assets/

├── config-template.yaml # kebab-case

└── sample-data.json # kebab-case
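The naming rules above are mechanical enough to check automatically. The sketch below encodes kebab-case for skill names and snake_case for script files as regular expressions; the function names are hypothetical. Note that a pattern can only check the format: a name such as "perf-mon-sys" is syntactically valid kebab-case and is discouraged only because of its abbreviations.

```python
import re

KEBAB_CASE = re.compile(r"[a-z0-9]+(-[a-z0-9]+)*")
SNAKE_CASE = re.compile(r"[a-z0-9]+(_[a-z0-9]+)*")


def is_valid_skill_name(name):
    """True if `name` is lowercase kebab-case, e.g. 'database-migrator'."""
    return KEBAB_CASE.fullmatch(name) is not None


def is_valid_script_name(filename):
    """True if `filename` is a snake_case .py or .sh file, e.g. 'process_data.py'."""
    stem, dot, extension = filename.rpartition(".")
    return bool(dot) and extension in {"py", "sh"} and SNAKE_CASE.fullmatch(stem) is not None


for candidate in ["database-migrator", "db_mig", "APIDocGen"]:
    print(candidate, is_valid_skill_name(candidate))
```

Using `fullmatch` rather than `match` matters here: it rejects names that merely start with a valid prefix.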

2. Documentation Conventions

Metadata Quality Standard

---

name: "skill-name"

description: "Clear, specific description focusing on what the skill does and when to use it"

version: "1.0.0" # semantic version

author: "Team Name <email>" # include contact details

tags: ["category", "technology"] # 3-5 relevant tags

dependencies: # explicit dependencies

required: ["essential-skill:^1.0.0"]

optional: ["enhancement-skill:*"]

---
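A metadata block like the one above can be linted before a skill is published. The following sketch works on an already-parsed dictionary, so it needs no YAML library. The set of required fields is an assumption (this section only shows `name`, `description`, and `version` as always present), and `metadata_problems` is a hypothetical name.

```python
import re

SEMVER = re.compile(r"\d+\.\d+\.\d+")
REQUIRED_FIELDS = ("name", "description", "version")  # assumed, not normative


def metadata_problems(metadata):
    """Return a list of human-readable problems; an empty list means it passed."""
    problems = [
        f"missing field: {field}"
        for field in REQUIRED_FIELDS
        if not metadata.get(field)
    ]
    version = metadata.get("version", "")
    if version and not SEMVER.fullmatch(version):
        problems.append(f"version is not MAJOR.MINOR.PATCH: {version}")
    tags = metadata.get("tags", [])
    if not 3 <= len(tags) <= 5:
        problems.append(f"expected 3-5 tags, found {len(tags)}")
    return problems


print(metadata_problems({"name": "skill-name", "description": "d", "version": "1.0", "tags": ["a"]}))
```

Returning all problems instead of raising on the first one lets an author fix the whole metadata block in a single pass.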

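Content Structure Standard

# Skill Title

## Overview (required)

A concise summary of the functionality, 1-2 paragraphs

## Prerequisites (recommended)

Preconditions and environment requirements

## Core Capabilities (required)

List of core features, as bullet points

## Usage Patterns (required)

Common usage scenarios and workflows

## Configuration (if applicable)

Configuration parameters, presented as a table

## Examples (required)

Concrete usage examples with complete code

## Troubleshooting (recommended)

Common problems and their solutions

## Related Skills (if applicable)

Links to related skills

Code Example Standard

✅ A complete code example: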

```python

# A complete, runnable example

from image_processor import ImageProcessor

processor = ImageProcessor(quality=85)

result = processor.resize_image(

input_path="input.jpg",

output_path="output.jpg",

width=800,

height=600

)

print(f"Processing completed: {result}")

```

❌ An incomplete example:

```python

# Missing imports and context

processor.resize_image(input_path, output_path)

```

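3. Version Management Conventions

Semantic Versioning

Version format: MAJOR.MINOR.PATCH

MAJOR: incompatible API changes

- Changing a core interface

- Removing functionality

- Changing the configuration format

MINOR: backward-compatible new functionality

- Adding new features

- Enhancing existing features

- Adding configuration options

PATCH: backward-compatible fixes

- Fixing bugs

- Improving documentation

- Performance improvements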
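The three rules above translate directly into a version bump: raising one component resets every component to its right. A minimal sketch (the function name `bump` is hypothetical):

```python
def bump(version, change):
    """Return the next version for a 'major', 'minor', or 'patch' change."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "major":
        return f"{major + 1}.0.0"
    if change == "minor":
        return f"{major}.{minor + 1}.0"
    if change == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


print(bump("2.0.1", "minor"))  # prints 2.1.0
print(bump("2.1.0", "major"))  # prints 3.0.0
```

The first call reproduces the step from 2.0.1 to 2.1.0, which is what a release that adds a backward-compatible feature would use.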

Changelog Maintenance

# CHANGELOG.md

## [2.1.0] - 2024-03-15

### Added

- New batch processing capability

- Support for WebP format conversion

- Automatic quality optimization

### Changed

- Improved error handling for large files

- Updated dependency requirements

### Fixed

- Memory leak in batch processing

- Incorrect aspect ratio calculation

## [2.0.1] - 2024-02-28

### Fixed

- Critical security vulnerability in file upload

- Performance issue with large image processing

Performance Optimization

1. Context Optimization Strategies

Keep Metadata Lean

# Before: the description is too detailed

description: "This comprehensive skill provides advanced image processing capabilities including but not limited to resizing, cropping, format conversion, quality optimization, batch processing, metadata handling, and integration with cloud storage services for modern web applications and mobile platforms"

# After: concise and specific

description: "Image processing including resize, crop, format conversion, and batch operations"

Layer the Content

# SKILL.md: keep the core content concise

## Quick Start

Basic usage patterns and common operations

## Configuration

Essential parameters only

# references/advanced-guide.md: detailed content moved out

## Advanced Configuration

Detailed parameter explanations and edge cases

## Performance Tuning

Optimization strategies and benchmarks

2. Resource Management Optimization

Script Optimization

# Before: everything is recomputed on each call

def process_image(image_path):

config = load_config() # loads the configuration on every call

processor = ImageProcessor(config) # creates a new instance on every call

return processor.process(image_path)

# After: cache and reuse

class OptimizedImageProcessor:

_instance = None

_config = None

@classmethod

def get_instance(cls):

if cls._instance is None:

cls._config = load_config()

cls._instance = ImageProcessor(cls._config)

return cls._instance

def process_image(self, image_path):

processor = self.get_instance()

return processor.process(image_path)

Resource File Optimization

# Compress large reference documents

gzip -9 references/large-documentation.md

# Optimize image assets

optipng assets/icons/*.png

jpegoptim --max=85 assets/images/*.jpg

# Archive configuration templates

tar -czf assets/templates.tar.gz assets/templates/

3. Dependency Management Optimization

Smart Dependency Loading

dependencies:

required:

- "core-utilities:^1.0.0" # core dependency

optional:

- "cloud-storage:>=2.0.0" # loaded on demand

- "advanced-analytics:*" # advanced features

conditional: # conditional dependencies

- condition: "environment == 'production'"

dependencies: ["monitoring-tools:^1.5.0"]

- condition: "features.includes('ml')"

dependencies: ["ml-toolkit:>=3.0.0"]
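The `conditional` entries above attach dependencies to condition strings. The article does not define the condition grammar, so the sketch below supports only the two shapes that actually appear (`key == 'value'` and `key.includes('value')`) and deliberately avoids `eval()`. All names are hypothetical.

```python
import re

EQUALS = re.compile(r"(\w+)\s*==\s*'([^']*)'")
INCLUDES = re.compile(r"(\w+)\.includes\('([^']*)'\)")


def condition_holds(condition, context):
    """Evaluate one condition string against a context dict, without eval()."""
    match = EQUALS.fullmatch(condition)
    if match:
        key, value = match.groups()
        return context.get(key) == value
    match = INCLUDES.fullmatch(condition)
    if match:
        key, value = match.groups()
        return value in context.get(key, [])
    raise ValueError(f"unsupported condition: {condition!r}")


def active_dependencies(conditional, context):
    """Collect the dependencies of every entry whose condition holds."""
    active = []
    for entry in conditional:
        if condition_holds(entry["condition"], context):
            active.extend(entry["dependencies"])
    return active


conditional = [
    {"condition": "environment == 'production'", "dependencies": ["monitoring-tools:^1.5.0"]},
    {"condition": "features.includes('ml')", "dependencies": ["ml-toolkit:>=3.0.0"]},
]
print(active_dependencies(conditional, {"environment": "production", "features": []}))
```

Rejecting unknown condition shapes with an error, rather than silently treating them as false, makes typos in a skill's configuration visible early.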

Quality Assurance

1. Automated Testing Strategy

Skill Validation Tests

# tests/test_skill_validation.py

import pytest

from skill_validator import SkillValidator

class TestSkillValidation:

def setup_method(self):

self.validator = SkillValidator()

def test_metadata_format(self):

"""Check that the metadata is well-formed."""

skill_path = "path/to/skill"

result = self.validator.validate_metadata(skill_path)

assert result.is_valid

assert "name" in result.metadata

assert "description" in result.metadata

def test_file_structure(self):

"""Check that the file structure is complete."""

skill_path = "path/to/skill"

result = self.validator.validate_structure(skill_path)

assert result.has_skill_md

assert result.scripts_valid

assert result.references_accessible

def test_dependency_resolution(self):

"""Check that dependencies resolve."""

skill_path = "path/to/skill"

result = self.validator.validate_dependencies(skill_path)

assert not result.has_circular_dependencies

assert result.all_dependencies_available
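The last test above expects the validator to detect circular dependencies. The `SkillValidator` implementation is not shown, so the following is only one way such a check could work: a depth-first search over a mapping from each skill to the skills it requires. The function name `find_cycle` and the graph format are assumptions.

```python
def find_cycle(graph):
    """Return one dependency cycle as a list of names, or None if there is none.

    `graph` maps each skill name to the names of the skills it depends on.
    """
    visiting = []   # the current DFS path, in order
    done = set()    # nodes whose whole subtree is known to be cycle-free

    def visit(node):
        if node in done:
            return None
        if node in visiting:
            # Back edge: the cycle is the path from the first visit to here
            return visiting[visiting.index(node):] + [node]
        visiting.append(node)
        for dependency in graph.get(node, ()):
            cycle = visit(dependency)
            if cycle:
                return cycle
        visiting.pop()
        done.add(node)
        return None

    for name in graph:
        cycle = visit(name)
        if cycle:
            return cycle
    return None


print(find_cycle({"a": ["b"], "b": ["c"], "c": ["a"]}))  # prints ['a', 'b', 'c', 'a']
```

Returning the cycle itself, rather than a boolean, gives the skill author an actionable error message.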

Functional Tests

# tests/test_skill_functionality.py

from image_processor import ImageProcessor

class TestImageProcessorSkill:

def test_basic_resize(self):

"""Test basic resizing."""

processor = ImageProcessor()

result = processor.resize_image(

"test_images/sample.jpg",

"output/resized.jpg",

width=800,

height=600

)

assert result.success

assert result.output_exists

assert result.dimensions == (800, 600)

def test_batch_processing(self):

"""Test batch processing."""

processor = ImageProcessor()

result = processor.batch_process(

input_dir="test_images/",

output_dir="output/batch/",

operations=[{"type": "resize", "width": 400}]

)

assert result.success_rate > 0.95

assert result.processed_count > 0

2. Code Quality Standards

Script Quality Checks

#!/bin/bash

# scripts/quality_check.sh

# Python code quality checks

echo "Running Python code quality checks..."

flake8 scripts/*.py --max-line-length=88

black --check scripts/*.py

mypy scripts/*.py

# Shell script checks

echo "Running shell script checks..."

shellcheck scripts/*.sh

# Documentation checks

echo "Running documentation checks..."

markdownlint *.md references/*.md

# Security checks

echo "Running security checks..."

bandit -r scripts/

Performance Benchmarks

# tests/test_performance.py

import time

import pytest

from memory_profiler import profile

from image_processor import ImageProcessor

class TestPerformance:

@pytest.mark.performance

def test_image_processing_speed(self):

"""Test image processing speed."""

processor = ImageProcessor()

start_time = time.time()

processor.resize_image("large_image.jpg", "output.jpg", 1920, 1080)

processing_time = time.time() - start_time

# Performance requirement: a large image must be processed in under 5 seconds

assert processing_time < 5.0

@profile

def test_memory_usage(self):

"""Test memory usage."""

processor = ImageProcessor()

# Process 100 images in a batch

for i in range(100):

processor.resize_image(f"test_{i}.jpg", f"output_{i}.jpg")

# Memory usage should stay stable, with no leaks

Maintenance Strategy

1. Lifecycle Management

Skill Lifecycle Stages

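[Diagram: skill lifecycle stages. Nodes: Development, Testing, Release, Maintenance, Update, Deprecation, Monitoring, Optimization, Migration Guide, Alternatives]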

Version Support Policy

# Version support matrix

version_support:

"3.x.x":

status: "active"

support_until: "2025-12-31"

security_updates: true

feature_updates: true

"2.x.x":

status: "maintenance"

support_until: "2024-12-31"

security_updates: true

feature_updates: false

"1.x.x":

status: "deprecated"

support_until: "2024-06-30"

security_updates: true

feature_updates: false
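A support matrix like this one is only useful if tooling consults it. As a small sketch, the function below answers whether a given version is still supported on a given date. The `SUPPORT_UNTIL` table copies the dates from the matrix above; the function name `is_supported` is hypothetical.

```python
from datetime import date

# End-of-support dates per major version, copied from the matrix above
SUPPORT_UNTIL = {
    3: date(2025, 12, 31),
    2: date(2024, 12, 31),
    1: date(2024, 6, 30),
}


def is_supported(version, today):
    """True if the major line of `version` is still supported on `today`."""
    major = int(version.split(".")[0])
    end = SUPPORT_UNTIL.get(major)
    # Unknown major versions are treated as unsupported
    return end is not None and today <= end


print(is_supported("2.3.0", date(2024, 7, 1)))  # prints True
print(is_supported("1.9.0", date(2024, 7, 1)))  # prints False
```

Passing `today` explicitly, instead of calling `date.today()` inside the function, keeps the check deterministic and easy to test.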

2. Monitoring and Analytics

Usage Monitoring

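# monitoring/skill_analytics.py

from datetime import datetime, timezone


class SkillAnalytics:
    # Assumes self.metrics_collector, get_user_context(), get_top_operations()
    # and analyze_errors() are provided elsewhere; they are not shown here.

    def track_usage(self, skill_name, operation, success, duration):
        """Record one use of a skill."""
        metrics = {
            'skill_name': skill_name,
            'operation': operation,
            'success': success,
            'duration': duration,
            # Timezone-aware UTC timestamp (datetime.utcnow() is deprecated)
            'timestamp': datetime.now(timezone.utc),
            'user_agent': self.get_user_context()
        }
        self.metrics_collector.record(metrics)

    def generate_usage_report(self, skill_name, period='30d'):
        """Build a usage report for the given period."""
        data = self.metrics_collector.query(skill_name, period)
        total = len(data)
        return {
            'total_uses': total,
            # Guard against division by zero when nothing was recorded
            'success_rate': sum(1 for d in data if d['success']) / total if total else 0.0,
            'avg_duration': sum(d['duration'] for d in data) / total if total else 0.0,
            'popular_operations': self.get_top_operations(data),
            'error_patterns': self.analyze_errors(data)
        }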

Performance Monitoring

# monitoring/performance_monitor.py

class PerformanceMonitor:

def __init__(self):

self.thresholds = {

'response_time': 5.0, # seconds

'memory_usage': 1024, # MB

'error_rate': 0.05 # 5%

}

def check_performance(self, skill_name):

"""Check a skill's performance metrics against the thresholds."""

metrics = self.get_recent_metrics(skill_name)

alerts = []

if metrics['avg_response_time'] > self.thresholds['response_time']:

alerts.append(f"High response time: {metrics['avg_response_time']:.2f}s")

if metrics['peak_memory'] > self.thresholds['memory_usage']:

alerts.append(f"High memory usage: {metrics['peak_memory']}MB")

if metrics['error_rate'] > self.thresholds['error_rate']:

alerts.append(f"High error rate: {metrics['error_rate']:.1%}")

return alerts

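3. Community Collaboration

Contribution Guide

# CONTRIBUTING.md

## How to Contribute

### Reporting Issues

1. Check existing issues to avoid duplicates

2. Use the issue template and provide details

3. Include reproduction steps and environment information

### Submitting Improvements

1. Fork the project and create a feature branch

2. Follow the coding conventions and testing requirements

3. Open a pull request describing the change

### Code Review Criteria

- Functional correctness

- Code quality and convention checks

- Performance impact assessment

- Documentation completeness

Community Feedback Channels

# Feedback collection configuration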

feedback_channels:

github_issues:

url: "https://github.com/org/skills/issues"

types: ["bug", "enhancement", "question"]

community_forum:

url: "https://forum.company.com/skills"

categories: ["general", "development", "showcase"]

direct_contact:

email: "skills-team@company.com"

response_time: "48h"

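Security Best Practices

1. Secure Configuration

Handling Sensitive Information

# Avoid hard-coding sensitive information in configuration

# ❌ Wrong: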

database:

host: "prod-db.company.com"

username: "admin"

password: "secret123"

# ✅ Right:

database:

host: "${DB_HOST}"

username: "${DB_USER}"

password: "${DB_PASSWORD}"
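Placeholders such as `${DB_HOST}` have to be resolved at load time. The article does not say which mechanism the Skills system uses; one option that happens to share the `${NAME}` syntax is `string.Template` from the standard library. The sketch below handles a flat dictionary of string values, and `resolve_secrets` is a hypothetical name.

```python
import os
from string import Template


def resolve_secrets(config, env=os.environ):
    """Replace ${NAME} placeholders in a flat config dict with values from `env`."""
    resolved = {}
    for key, value in config.items():
        try:
            # substitute() raises KeyError for any placeholder without a value
            resolved[key] = Template(value).substitute(env)
        except KeyError as missing:
            raise RuntimeError(f"environment variable {missing} is not set") from None
    return resolved


settings = resolve_secrets(
    {"host": "${DB_HOST}", "username": "${DB_USER}"},
    env={"DB_HOST": "db.internal", "DB_USER": "app"},
)
print(settings)  # prints {'host': 'db.internal', 'username': 'app'}
```

Failing loudly on a missing variable is a deliberate choice: silently leaving `${DB_PASSWORD}` in place would surface much later as a confusing authentication error.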

Least Privilege

# scripts/secure_processor.py

import os
import tempfile


class SecurityError(Exception):
    """Raised when an input fails a security check."""


class SecureFileProcessor:
    def create_temp_file(self, content):
        """Create a temporary file that only the owner can read and write."""
        # mkstemp picks an unpredictable name and creates the file with
        # mode 0o600, so other users never get a chance to read it.
        fd, temp_path = tempfile.mkstemp(prefix="secure_", text=True)
        with os.fdopen(fd, 'w') as f:
            f.write(content)
        return temp_path

    def validate_input(self, file_path):
        """Validate that an input file path is safe to process."""
        # Reject path traversal attempts and absolute paths
        if ".." in file_path or file_path.startswith("/"):
            raise SecurityError("Invalid file path")

        # Allow only known file types
        allowed_extensions = {'.jpg', '.png', '.gif', '.pdf'}
        if not any(file_path.endswith(ext) for ext in allowed_extensions):
            raise SecurityError("File type not allowed")

        return True
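String checks such as looking for ".." catch the obvious traversal attempts but can be bypassed or can reject harmless names. A stricter complement, sketched below under the assumption that all inputs should live under one base directory, is to resolve the path and verify that the result is still inside that directory. The function name `resolve_inside` is hypothetical, and `Path.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import Path


def resolve_inside(base_dir, user_path):
    """Resolve `user_path` under `base_dir`, refusing anything that escapes it."""
    base = Path(base_dir).resolve()
    # Joining with an absolute user_path discards base, which the check below catches
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):
        raise ValueError(f"path escapes {base}: {user_path}")
    return candidate
```

For example, `resolve_inside("uploads", "images/a.png")` returns the absolute path of the file inside `uploads`, while `resolve_inside("uploads", "../secret.txt")` raises `ValueError`. Because `resolve()` also follows symbolic links, a link that points outside the base directory is rejected as well.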

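2. Input Validation

Parameter Validation

# validation/input_validator.py

from typing import Any, Dict, List

import re

class ValidationError(ValueError):
    """Raised when a parameter fails validation."""

class InputValidator: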

def __init__(self):

self.validation_rules = {

'email': r'^[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}$',

'filename': r'^[a-zA-Z0-9._-]+$',

'url': r'^https?://[^\s/$.?#].[^\s]*$'

}

def validate_parameters(self, params: Dict[str, Any], schema: Dict[str, Any]) -> bool:

"""Validate parameters against a schema."""

for param_name, param_value in params.items():

if param_name not in schema:

raise ValidationError(f"Unknown parameter: {param_name}")

param_schema = schema[param_name]

# Type check

if not isinstance(param_value, param_schema['type']):

raise ValidationError(f"Invalid type for {param_name}")

# Range check

if 'min' in param_schema and param_value < param_schema['min']:

raise ValidationError(f"{param_name} below minimum value")

if 'max' in param_schema and param_value > param_schema['max']:

raise ValidationError(f"{param_name} above maximum value")

# Regular expression check (a named rule, or a literal pattern)

if 'pattern' in param_schema:

pattern = self.validation_rules.get(param_schema['pattern'], param_schema['pattern'])

if not re.match(pattern, str(param_value)):

raise ValidationError(f"Invalid format for {param_name}")

return True

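By following these best practices, developers can build high-quality, secure, and reliable Skills that give AI Agents a stable way to extend their capabilities. The same practices also keep the overall system maintainable and extensible.

Summary

The Skills system is a modular capability-extension mechanism for AI Agents. Through a standardized configuration format and a progressive loading strategy, it addresses the need for AI to specialize in particular domains. This article has covered the design ideas and implementation of the Skills system, from introduction, principles, and format through examples and best practices.

With this knowledge, developers can:

- Understand the core value and application scenarios of the Skills system

- Follow a standardized development process for Skills

- Create high-quality, maintainable skill modules

- Build specialized AI Agent solutions

The strength of the Skills system lies in a simple design idea: standardize and modularize complex domain knowledge so that an AI Agent can quickly acquire specialized capabilities. As AI technology develops, the Skills system can be expected to keep evolving as a foundation for more capable and specialized AI applications.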